File sync and file copy duration predictions suck. At least they do everywhere I have encountered them, which is mostly on Windows systems. I've done my share of *nix and Linux, but that never involved massive copying of data. Every time I switch computers, though, this is the "task du jour", and it invariably involves getting annoyed about the unstable and unreliable predictions of how much time is left to back up and/or verify my data. That's been the case for some 20+ years now, so it's about time things changed.

At least the latest version of

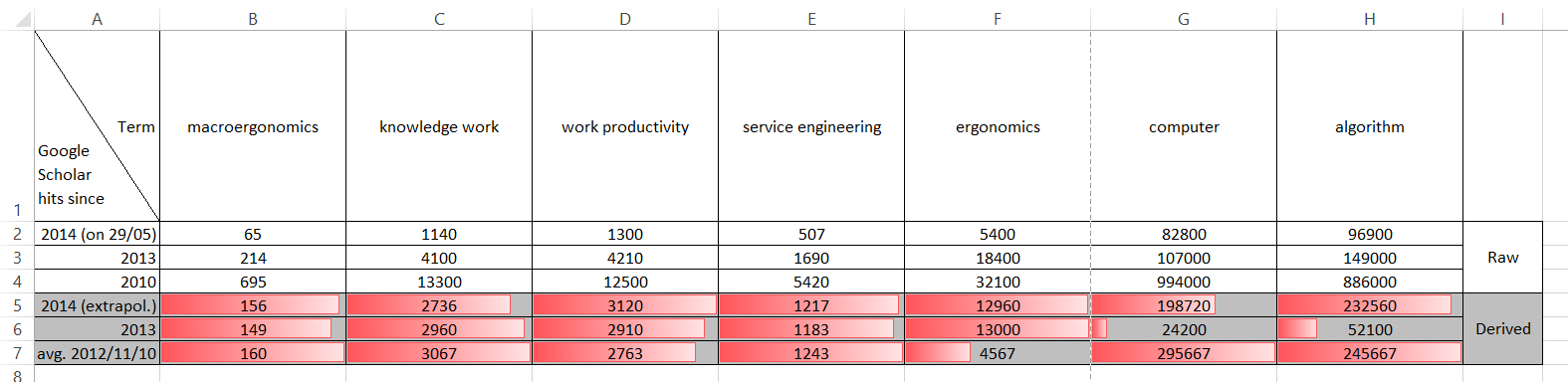

FreeFileSync shows traces of improvement - it essentially offers two parallel "burndown" charts, one for the volume of data (raw MBs / GBs / TBs), and one for the raw number of files. I've posted a screenshot of my ongoing "XP netbook backup in preparation of new OS" operation below.

Unlike the run in the official screenshot of the feature, which is unrepresentatively small and linear, a typical sync copy operation involves many gigabytes worth of data, stored in thousands of files of very different sizes. As a result, you will have areas where your typical "bytes per second" volume metric drops because the folder being processed is dominated by tons of tiny files, and the hard disk's seek time becomes the bottleneck. At the other extreme are areas with gigabyte-sized files, where the transfer just streams through (somewhat depending on fragmentation), and the bottlenecks are read, write and transfer bandwidths.

It's in the former areas that classical "predictors" often forecast days and months of copy delay, disregarding the fact that the "tiny file" count also needs to be burned down at some point, and that storage size / volume throughput doesn't matter too much in these areas.
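To put a number on that effect, here is a minimal sketch with made-up figures (the 100 GB remainder, the 4 KB file size and the 50 files per second are all assumptions for illustration, not measurements). It does what a purely throughput-based predictor does: take the current bytes-per-second rate and extrapolate it over everything that is left.

```python
def naive_eta_seconds(bytes_left: float, current_bytes_per_s: float) -> float:
    """Extrapolate the current byte throughput over all remaining bytes."""
    return bytes_left / current_bytes_per_s


# Hypothetical situation: 100 GB still to go, but right now the copy is
# crawling through a folder of 4 KB files at 50 files per second.
bytes_left = 100e9
tiny_file_bytes = 4_000
files_per_s = 50

local_rate = tiny_file_bytes * files_per_s          # 200 KB/s, seek-bound
eta_seconds = naive_eta_seconds(bytes_left, local_rate)

print(f"{eta_seconds / 86_400:.1f} days")           # prints 5.8 days
```

The forecast is only that bad because the local rate says nothing about the big files that make up most of the remaining volume.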

What does count for the end-user is when the overall operation is finished, which is not when the unstable, throughput-biased predictor thinks it is.

So what can be done? It should be really simple, given that every sync/copy tool I know of indexes the work ahead of the actual operation. It can therefore know the file size distribution in advance, and can easily relate it to the actuals measured as it goes along. So instead of only looking at the current throughput (bytes per second) and extrapolating its local value until the bitter end, it could:

- look at the "files per second" throughput in parallel, extrapolate this as well and average the result - however, that's still locally biased and implicitly assumes that byte throughput and file throughput are equally relevant to the runtime

- assess current bytes/second and files/second, and compute a weighted average prediction based on how many files and how many bytes are still left to go - still locally biased, and somewhat dependent on the balance in the remaining population of files, but at least it's a first step of improvement

- compute weighted extrapolations based on the entire work done so far, both along the "files" and "bytes" dimension - more complex, but less local than the previous option

- compute some simple linear regressions: time_left = f(file_size, file_count) - sounds fancy, but it's not really that much more sophisticated than dumb extrapolation!

- compute optimal estimates with other fancy algorithms - most fun for the data scientist in me, and promising best predictive value, but it needs a good data basis for model optimisation. Anyone interested in shooting me detailed logs for crunching? :-)
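As a rough illustration of the regression idea in the list above, here is a minimal sketch, not what FreeFileSync or any other tool actually does. It assumes the tool logs, per measurement interval, how many bytes and files it processed and how long that took, and that the time is roughly linear in both: `seconds = a * bytes + b * files`. The names `fit_costs` and `estimate_seconds_left` and the sample numbers are made up for this example.

```python
from typing import List, Optional, Tuple

# One progress sample per measurement interval (an assumed log format):
# (bytes copied, files copied, seconds taken) within that interval.
Sample = Tuple[float, float, float]


def fit_costs(samples: List[Sample]) -> Optional[Tuple[float, float]]:
    """Least-squares fit of seconds = a * bytes + b * files (no intercept).

    Returns (seconds per byte, seconds per file), or None when the samples
    cannot separate the two costs (too few or too similar intervals).
    """
    sbb = sbf = sff = sbt = sft = 0.0
    for d_bytes, d_files, d_secs in samples:
        sbb += d_bytes * d_bytes
        sbf += d_bytes * d_files
        sff += d_files * d_files
        sbt += d_bytes * d_secs
        sft += d_files * d_secs
    # Solve the 2x2 normal equations with Cramer's rule.
    det = sbb * sff - sbf * sbf
    if sbb * sff == 0.0 or det <= 1e-9 * sbb * sff:
        return None
    a = (sbt * sff - sft * sbf) / det
    b = (sft * sbb - sbt * sbf) / det
    if a < 0.0 or b < 0.0:  # negative costs make no physical sense
        return None
    return a, b


def estimate_seconds_left(samples: List[Sample],
                          bytes_left: float, files_left: float) -> float:
    """Predict remaining time from all work done so far."""
    fit = fit_costs(samples)
    if fit is None:
        # Fallback: extrapolate the average byte rate over the whole run.
        total_bytes = sum(s[0] for s in samples)
        total_secs = sum(s[2] for s in samples)
        if total_bytes == 0:
            return float("inf")
        return bytes_left * total_secs / total_bytes
    a, b = fit
    return a * bytes_left + b * files_left


# Synthetic log generated with 100 MB/s streaming and 20 ms per-file overhead.
samples = [
    (1e9, 10, 10.2),     # a few huge files: bandwidth-bound
    (4e6, 1000, 20.04),  # a thousand tiny files: seek-bound
    (5e8, 200, 9.0),     # mixed folder
]
eta = estimate_seconds_left(samples, bytes_left=50e9, files_left=100_000)
print(f"about {eta:.0f} s left")
```

Because the fit uses the whole history and both dimensions, a stretch of tiny files raises the per-file cost estimate instead of dragging the byte rate towards zero. On real logs the two costs will not be constant, so this is a starting point rather than a finished predictor.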

Even the best sync software (e.g. FreeFileSync) doesn't do this well right now - in a job running as I write this, it insists on needing two more days for a verify op that has so far taken 3 hours for 25 % of the volume. Don't know about you, but I'd rather expect to have 9 hours left to wait...
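For the record, that back-of-the-envelope figure simply assumes the remaining volume goes at the average pace seen so far, with $p$ being the fraction of the volume already done:

$$
t_{\text{left}} = t_{\text{elapsed}} \cdot \frac{1 - p}{p} = 3\,\text{h} \cdot \frac{0.75}{0.25} = 9\,\text{h}
$$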

Rant over, let's get this fixed!